
Kubernetes ComponentsĪ Kubernetes deployment includes a cluster consisting of one or several worker nodes that run containerized applications. Users only need to provide their physical specifications and number of nodes, and the cluster can be scaled up and down automatically. Cloud infrastructure such as Google Cloud and AWS help automate cluster management.

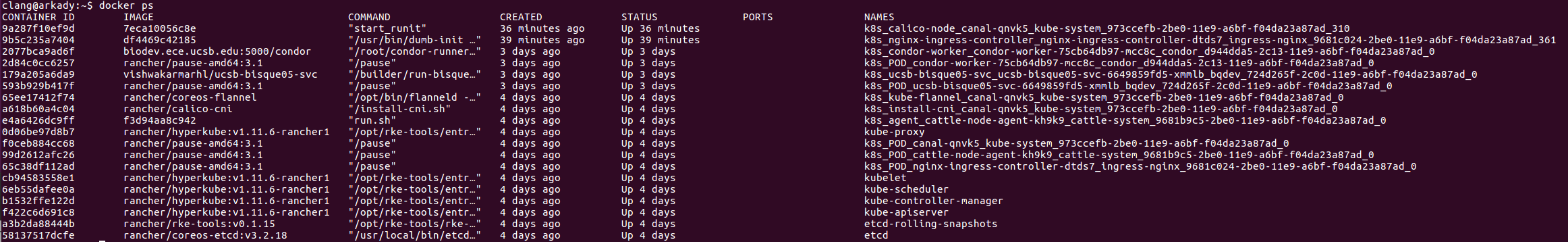

When you add nodes to this “node pool” to scale out the cluster, workloads are rebalanced across the new nodes. A cluster contains a Kubernetes control plane and nodes that provide memory and compute resources. ClusterĪ cluster is a collection of all the above components. You can perform operations via command-line scripts or HTTP-based API calls. This component controls the interaction between Kubernetes and your applications. Control PlaneĪdministrators and users can access Kubernetes through the control plane to manage nodes and workloads. These components include a container runtime, kube-proxy, and kubelet. Each Kubernetes node has services to create runtime environments and support pods. Nodes contain the services required to run pods, communicate with master components, configure networking, and run assigned workloads. NodeĪ Kubernetes node is a logical collection of IT resources that supports one or more containers. A service acts as a trusted endpoint for communication between components or applications. The service is assigned a virtual IP address called clusterIP and holds it until it is explicitly destroyed. Instead, it connects to the service and relays it to the currently running, connected Pod. This means that Pods that need to communicate with other Pods cannot rely on the IP address of a single primary Pod. Kubernetes guarantees the availability of specific pods and replicas, but does not guarantee the uptime of individual pods. The need for a Service stems from the fact that pods in Kubernetes are temporary and can be replaced at any time. The service is responsible for maintaining access policies and enforcing these policies for incoming requests. In Kubernetes, a Service is an entity that represents a set of pods that run an application or functional component. The Deployment adds or removes pods to maintain the desired application state, and tracks pod state to ensure optimal deployment. It also specifies a policy for distributing updates. 
Users need to define replication settings, specifying how the pod should be replicated on Kubernetes nodes.Ī Deployment object specifies the default number of clones Kubernetes should create of the same pod. DeploymentĪ Deployment allows users to specify the scale at which applications should run. However, Kubernetes also supports a persistent storage mechanism called PersistentVolumes. This means that if you replace or destroy a pod, its data is lost. Many processes work together, sharing the same amount of computing resources.īy default, pods work with temporary storage. This is useful for more complex workload configurations. Multi-container pods, on the other hand, make deployment configuration easier, because they can automatically share resources between containers.

In some cases, a pod can contain a single container. Resources such as storage and RAM are shared by all containers in the same pod, so they can run as one application. Each pod has an IP address shared by all containers. Let’s review some of the fundamental concepts of a Kubernetes environment.Ī pod is a set of containers, the smallest architectural unit managed by Kubernetes. Understanding the Kubernetes Architecture
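To make the Deployment and Service concepts from this article more concrete, here is a minimal sketch in Python that builds both objects as plain dictionaries and prints them as a JSON `List` manifest. The application name `web`, the image `nginx:1.25`, the port numbers, and the helper functions `make_deployment` and `make_service` are illustrative assumptions, not part of the article; only the field names follow the standard `apps/v1` Deployment and `v1` Service schemas.

```python
import json


def make_deployment(name: str, image: str, replicas: int) -> dict:
    """Build a Deployment manifest asking for `replicas` copies of one pod."""
    labels = {"app": name}  # hypothetical label used to tie pods to the Service
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,                   # desired number of pods
            "selector": {"matchLabels": labels},    # which pods this Deployment owns
            "strategy": {"type": "RollingUpdate"},  # policy for rolling out updates
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": 80}],
                        }
                    ]
                },
            },
        },
    }


def make_service(name: str, port: int = 80) -> dict:
    """Build a ClusterIP Service that routes traffic to pods labelled app=<name>."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "ClusterIP",        # stable virtual IP inside the cluster
            "selector": {"app": name},  # must match the pod labels above
            "ports": [{"port": port, "targetPort": 80}],
        },
    }


def make_manifest_list(items: list) -> dict:
    """Wrap several objects in a single `List` so they can be applied together."""
    return {"apiVersion": "v1", "kind": "List", "items": items}


if __name__ == "__main__":
    manifest = make_manifest_list(
        [make_deployment("web", "nginx:1.25", 3), make_service("web")]
    )
    print(json.dumps(manifest, indent=2))
```

Assuming the script is saved under a name of your choice, its output could be piped to `kubectl apply -f -`. The Service selector and the pod template labels are deliberately generated from the same name, because that label match is what lets the Service find whichever pods the Deployment is currently running.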
